The Anatomy of a Prompt Injection Attack

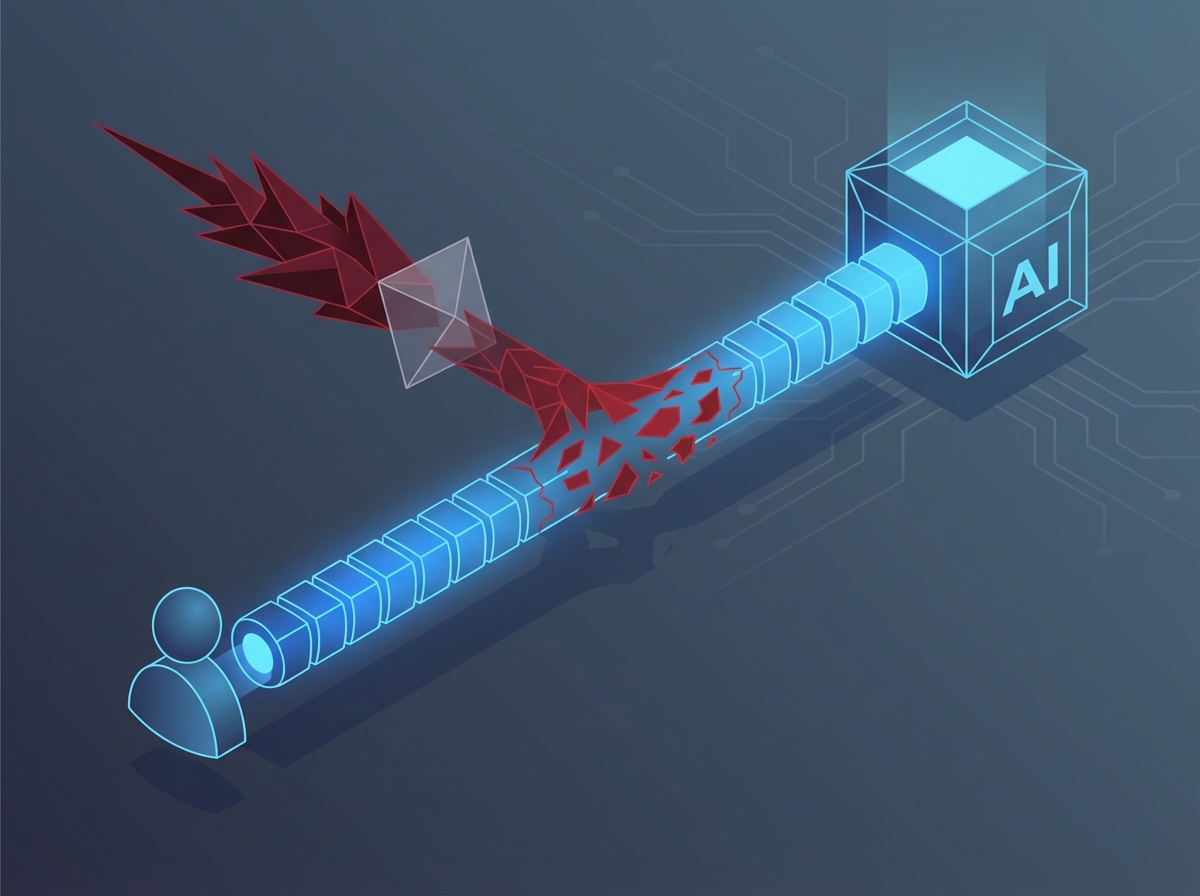

At its core, a prompt injection is a vulnerability where an attacker manipulates a Large Language Model (LLM) into executing unintended actions. Because LLMs process natural language without a strict architectural boundary between developer instructions and user input, cleverly crafted text can easily blur the lines between standard data and executable commands.

These attacks typically fall into two distinct categories:

- Direct Prompt Injections (Jailbreaking): In this scenario, the attacker interacts directly with the model's interface. By feeding the LLM highly specific phrasing—such as "Ignore all previous instructions" or by adopting complex roleplay scenarios—the attacker tricks the system into bypassing its built-in safety guardrails.

- Indirect Prompt Injections (Poisoned Context): This is a stealthier approach. Instead of directly attacking the prompt box, the threat actor hides malicious instructions inside external data sources the LLM is programmed to ingest. If an AI assistant summarizes a compromised webpage or analyzes a maliciously modified document, it unwittingly consumes and executes the hidden commands.

The technical mechanics of these attacks rely on exploiting the model's text-prediction engine. When an attacker successfully injects a payload, the model temporarily abandons its original system prompt and treats the new malicious context as its primary objective. This allows the attacker to hijack the application logic, bypassing content filters or even exfiltrating sensitive data by tricking the model into appending private information to an external, attacker-controlled URL via an integrated tool.

To understand the threat fully, we can break down the typical prompt injection attack lifecycle:

- Reconnaissance: The attacker probes the LLM application with benign but cleverly structured inputs to map out its capabilities, connected plugins, and underlying system prompts.

- Payload Delivery: The threat actor crafts the injection payload and delivers it directly via a user interface or indirectly by planting it in a trusted external data source.

- Execution: The LLM parses the combined input, fails to distinguish the malicious payload from its core instructions, and begins processing the attacker's hidden command.

- Exploitation: The model ultimately performs the harmful action, whether that means leaking proprietary information, triggering an unauthorized API action, or generating prohibited content.

Architecting a Resilient LLM Security Layer

Building a secure LLM application requires more than just connecting to an API. It demands a robust, multi-layered architecture designed to intercept and neutralize threats before they interact with your core model. Think of it as a specialized firewall built specifically for the nuances of natural language.

The first line of defense focuses on the user's prompt. Traditional input validation is essential for catching obvious malicious patterns, overly long inputs, or disallowed characters. However, because LLMs process meaning rather than just syntax, you must also implement semantic filtering. This involves analyzing the intent behind a prompt to block subtle manipulation attempts, such as role-playing jailbreaks or sophisticated prompt injections.

To effectively execute this semantic analysis, leading architectures employ secondary "guard" models. Often referred to as "LLM-as-a-judge," these smaller, highly tuned models act as dedicated gatekeepers. They evaluate incoming prompts against strict safety policies and inspect the core model's output before it reaches the user. If the guard model detects a prompt injection attempt or an unsafe response, it immediately blocks the interaction or triggers a predefined safe fallback mechanism.

Finally, securing the LLM layer requires rigorous output sanitization. Even with strong input defenses, core models can occasionally generate sensitive, inaccurate, or non-compliant data. A comprehensive security architecture should actively filter these responses using the following strategies:

- Data Loss Prevention (DLP): Automated scanners that detect and redact personally identifiable information (PII) or corporate secrets from model outputs.

- Toxicity Classifiers: Dedicated filters to ensure all generated content remains aligned with brand safety and ethical guidelines.

- Format Validation: Scripts that guarantee the model's response matches the expected structure, preventing downstream application crashes.

By combining strict input validation, intelligent guard models, and thorough output sanitization, you create a resilient defensive buffer. This layered approach guarantees that your LLM infrastructure remains secure, governed, and reliable in production.

Navigating AI Governance and Legal Compliance

The era of unregulated artificial intelligence is rapidly coming to a close. As large language models become deeply integrated into enterprise workflows, the regulatory landscape is evolving to keep pace. Frameworks like the pioneering EU AI Act and established privacy laws like the General Data Protection Regulation (GDPR) are setting strict boundaries on how organizations can develop and deploy AI. Navigating this complex web is no longer just a legal obligation—it is a critical business imperative.

When it comes to LLMs, GDPR introduces significant challenges. Because these models often process massive amounts of unstructured text, ensuring data minimization and protecting Personally Identifiable Information (PII) requires proactive measures. Organizations must guarantee that user prompts and model outputs do not inadvertently leak sensitive data or violate a user's right to be forgotten.

Simultaneously, the EU AI Act categorizes AI systems by risk, placing heavy transparency and accountability requirements on high-risk applications. To avoid steep fines and reputational damage, companies must proactively prove their AI systems are safe, unbiased, and transparent.

Meeting these rigorous legal requirements and satisfying internal governance policies requires a structured approach to AI operations. Teams must operationalize compliance by embedding the following core practices into their LLM architecture:

- Comprehensive Audit Logging: Maintain immutable records of every prompt submitted to the model and every response generated. This continuous paper trail is essential for forensic investigations, compliance audits, and proving that the system behaves as intended.

- Explainability Protocols: Deploy tools and frameworks that help interpret model behavior. While LLMs are notoriously opaque, implementing strict guardrails, tracking prompt transformations, and documenting decision-making processes can help administrators explain why a specific output was generated.

- Continuous Monitoring: Governance is not a one-time checklist. Establish real-time monitoring to detect model drift, identify data privacy violations, and flag potentially toxic or biased outputs before they reach the end user.

By treating governance as a foundational feature rather than an afterthought, organizations can confidently scale their AI initiatives. Robust compliance mechanisms do more than satisfy regulators; they build enduring trust with customers, partners, and internal stakeholders.

Data Privacy: Protecting Enterprise IP in the AI Pipeline

When integrating Large Language Models (LLMs) into enterprise environments, particularly through Retrieval-Augmented Generation (RAG) architectures, protecting your intellectual property and user data is paramount. RAG systems fetch proprietary data to ground the LLM's answers, but without strict safeguards, this pipeline can easily become a vector for data leaks.

To secure a RAG setup, you must implement fine-grained access controls at the retrieval phase. An LLM should only process information that the querying user is explicitly authorized to see. If an employee prompts the system, the retrieval engine must respect existing Identity and Access Management (IAM) policies, filtering out restricted documents before feeding context to the model.

This requirement naturally extends to vector database security. Your vector stores contain highly concentrated semantic embeddings of your most valuable enterprise IP. To protect this data, security teams must enforce several critical measures:

- Row-Level Security: Ensure that vector searches respect user permissions by filtering document chunks at the database level.

- Tenant Isolation: Maintain strict logical or physical separation of data, especially in multi-tenant environments.

- End-to-End Encryption: Encrypt all embeddings and associated metadata both at rest and in transit.

Beyond access controls, Data Loss Prevention (DLP) integration acts as a crucial protective boundary around the LLM. By deploying DLP scanners to inspect outbound prompts and inbound retrieved documents, you can automatically detect and redact sensitive information. This ensures that trade secrets, API keys, and internal codenames are stripped away before they ever cross into the model's processing environment.

Finally, you must address the risk of the LLM inadvertently memorizing or exposing Personally Identifiable Information (PII). Apply techniques like data masking, tokenization, and anonymization during the prompt assembly phase. By replacing real PII with synthetic equivalents or reference tokens, you guarantee that even if the model is manipulated by a prompt injection attempt, the underlying sensitive data simply is not there to be exposed.